It might sound strange to some, but touchscreens aren't truly suitable for all sorts of displays, especially large monitors and TVs, and that makes Fujitsu's hands-free eye-tracking system a fairly intriguing development.

We say this because the technology opens the possibility of large panels skipping touch altogether.

Touch works well on handheld gadgets, but on monitors and HDTVs it requires users to hold out their arms, which naturally becomes tiresome.

In comparison, being able to relay certain commands just through eye movements would be very convenient.

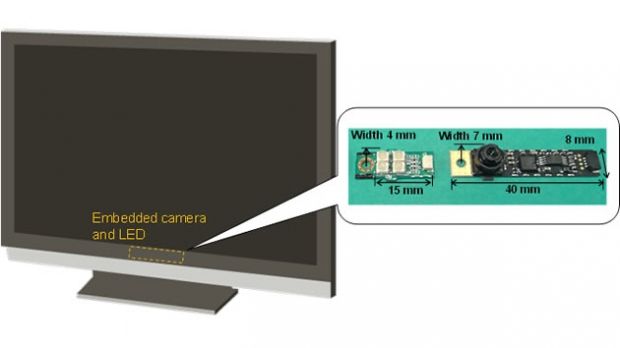

Fujitsu's eye-tracking system, demonstrated at CEATEC Japan 2012, combines a built-in camera with an infrared LED.

In a map application, moving the eyes left or right pans the map view toward whatever the eyes seek. Looking up or down has the same effect, and other eye movements are expected to trigger further responses eventually.

Seeing as the tech is only at the prototype stage, it is impressive that Fujitsu has managed to do even this much already.

For those who want to know the mechanics behind the hands-free computing feature, the camera captures the reflection of light on the user's eye. After that, image processing technology calculates the user's viewing angle.

Based on those two factors, the movements of the eyes are interpreted and translated into navigation commands (panning, zooming, scrolling, etc.).
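To make the last step more concrete, here is a minimal sketch of how a computed viewing angle might be turned into a panning command. It is not Fujitsu's implementation: the function name `gaze_to_command`, the command strings, the sign convention (positive means right or up) and the 5-degree dead zone are all assumptions chosen for illustration.

```python
from typing import Optional


def gaze_to_command(
    horizontal_deg: float,
    vertical_deg: float,
    dead_zone_deg: float = 5.0,  # hypothetical threshold, not a Fujitsu figure
) -> Optional[str]:
    """Map a gaze angle (degrees away from screen center) to a pan command.

    Assumed convention: positive horizontal = right, positive vertical = up.
    Returns None when the gaze rests near the center of the screen.
    """
    # Inside the dead zone the user is looking roughly at the center: do nothing.
    if abs(horizontal_deg) <= dead_zone_deg and abs(vertical_deg) <= dead_zone_deg:
        return None

    # Otherwise pan along whichever axis the gaze deviates from center the most.
    if abs(horizontal_deg) >= abs(vertical_deg):
        return "pan_right" if horizontal_deg > 0 else "pan_left"
    return "pan_up" if vertical_deg > 0 else "pan_down"
```

The dead zone is there so that small, involuntary eye movements around the center of the screen do not move the map; a real system would presumably tune it during calibration.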

This is not the first eye-controlled technology by any means, but it could be cheaper than most, as the components are integrated directly into the PC. Once better calibration protocols are implemented, it should be ready for mass production.

Fujitsu means to commercialize the technology before the end of this year or in early 2013, both as a boon to disabled users and as a commodity for everyone willing to meet the price, whatever it turns out to be.
